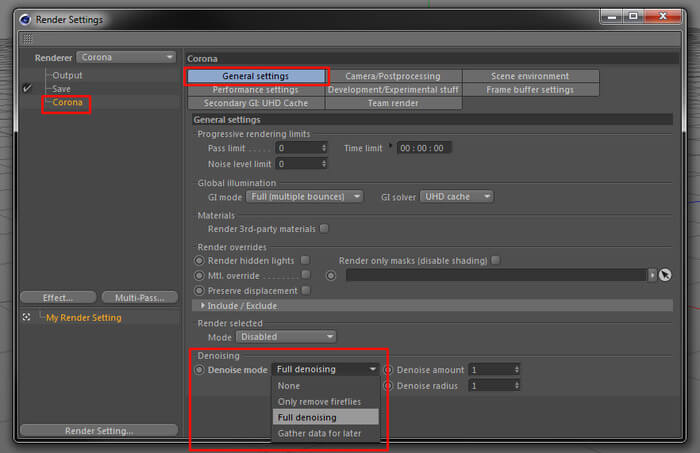

Ray tracing, like photography, needs a lot of light samples to get a clear image, and in both cases noise is always a challenge.

In photography, when there’s not enough light, the samples are sparse and the overall image will be grainy. If you ray trace for only a short time, the samples are limited and the image will be noisy. In both cases, the solution is to allow for more samples. To get more samples in photography, you can open the aperture or increase the exposure time to let in more photons. In ray tracing, you can either wait longer to calculate more samples, or add more computing power to resolve the image faster.

Another thing that can help with noise in an image is called denoising. The simplest denoising solution is to blur all the nearby pixels to get an average, but the result will just be an overall blurry image. If the denoising solution is also able to detect edges and make sure they remain sharp, the result will be better. Improving denoising beyond this is a more difficult problem.

In the example below, we start with a noisy render that has only a few samples. The second image shows what happens when you apply a simple blur, and the third image shows what happens when you detect edges and blur at the same time. For these images, we used Photoshop’s Smart Blur filter. Technically, Photoshop’s denoiser would work far better than Smart Blur, but this helps illustrate the point.

In V-Ray 3.x we introduced our own denoising solution. It allows the user to render an image up to a certain point and then let V-Ray denoise it based on the information it has. One thing we mention in our Guide to GPU is that GPUs are excellent at massively parallel tasks. With GPUs, we get about a 20x speed boost, and the process can finish in just a few seconds.

But it could be faster. What if, instead of solving the denoising problem independently for each image, V-Ray could refer back to past denoising solutions to solve the problem faster? Using this previously “learned” data is the basis of machine learning. V-Ray already uses data learned during the light cache pass to solve a variety of rendering problems much faster. The Adaptive Sampler, Adaptive Lights and the new Adaptive Dome Light all use this concept. But what if V-Ray could learn from other renderings too, not just the one it’s working on?

Right now, there’s a lot of buzz surrounding the topics of Deep Learning and Deep Neural Networks. How “deep” a neural network is simply refers to the number of layers it contains. The idea is to build a computational network that learns how to solve specific problems, either from provided solutions to the problem or by learning from its own tests. Once the network better understands how to solve a problem, such as denoising, it can solve it much faster. Imagine you didn’t know that 5+5=10 and had to count on your fingers each time; that would be much slower. Because you already know the answer, you can skip counting on your fingers.

In theory, by feeding the neural network thousands of different noisy renders along with their clean final versions, it could learn how to solve the noise problem from this image data, and then apply the solution to other cases. That’s exactly what NVIDIA has introduced with their OptiX AI-accelerated denoiser. They built a neural network using thousands of images rendered in Iray, and this learned data can now be applied to other ray traced images. We decided to experiment with how this learned data might benefit V-Ray.

What’s the advantage of NVIDIA’s OptiX denoiser over V-Ray’s denoiser? The V-Ray denoiser is very fast and can denoise an image in seconds on a GPU, while the OptiX solution can denoise a render in real time. But let’s keep in mind that a denoised image is never going to be quite accurate. By definition, the denoiser gives you its best guess of what the final image should be. At the same time, accuracy may not be the most important thing. If you can get a workable noise-free image in real time, it could have an impact on your workflow, especially during lighting and look development.

How the NVIDIA OptiX denoiser works in V-Ray

It’s possible to use the learned data with V-Ray, even though the information was gathered using Iray renders. We could even retrain the network using V-Ray renders.
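The point that more samples mean less noise can be made concrete with a toy Monte Carlo estimate. This is a minimal sketch and not how V-Ray samples a scene. It assumes a hypothetical pixel where each random ray either hits a light (value 1.0) or misses (0.0) with equal probability, so the true pixel value is 0.5. Measured as the spread across repeated renders of the same pixel, the noise falls roughly as 1/sqrt(N) in the sample count N. Quadrupling the samples therefore only halves the noise, which is why waiting longer gets expensive.

```python
import random
import statistics


def estimate_pixel(num_samples, rng):
    # Hypothetical pixel: each random ray hits a light (1.0) or misses (0.0)
    # with probability 0.5, so the true pixel value is 0.5.
    hits = sum(1.0 if rng.random() < 0.5 else 0.0 for _ in range(num_samples))
    return hits / num_samples


def noise_level(num_samples, trials=2000, seed=1):
    # "Render" the same pixel many times and measure how much the estimates
    # scatter around the true value (population standard deviation).
    rng = random.Random(seed)
    estimates = [estimate_pixel(num_samples, rng) for _ in range(trials)]
    return statistics.pstdev(estimates)


for n in (4, 16, 64, 256):
    print(f"{n:4d} samples -> noise {noise_level(n):.3f}")
```

For this pixel the theoretical noise is 0.5/sqrt(N), so the printed values should come out near 0.25, 0.125, 0.0625 and 0.031, up to random variation.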
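The difference between a simple blur and a blur that respects edges can be shown on a single row of pixels. This is a minimal sketch under stated assumptions. The scanline values are made up. The edge-aware rule (only average neighbors within a fixed `threshold` of the center pixel) is a simple stand-in for edge detection and is not Photoshop's Smart Blur algorithm or V-Ray's denoiser.

```python
def box_blur(row, radius=1):
    # Simple blur: each pixel becomes the average of every neighbor in the
    # window, including neighbors on the other side of an edge.
    out = []
    for i in range(len(row)):
        window = row[max(0, i - radius): i + radius + 1]
        out.append(sum(window) / len(window))
    return out


def edge_aware_blur(row, radius=1, threshold=0.3):
    # Edge-aware blur: only neighbors whose value is within `threshold` of the
    # center pixel are averaged, so a strong edge is not smeared. The center
    # pixel always qualifies, so `similar` is never empty.
    out = []
    for i, center in enumerate(row):
        window = row[max(0, i - radius): i + radius + 1]
        similar = [v for v in window if abs(v - center) <= threshold]
        out.append(sum(similar) / len(similar))
    return out


# Hypothetical noisy scanline: a dark area (about 0.1) meeting a bright area (about 0.9).
noisy = [0.05, 0.15, 0.10, 0.12, 0.08, 0.95, 0.85, 0.90, 0.88, 0.92]

print([round(v, 2) for v in box_blur(noisy)])
print([round(v, 2) for v in edge_aware_blur(noisy)])
```

The box blur pulls the last dark pixel (index 4) up to about 0.38 because it averages in its bright neighbor. The edge-aware version keeps that pixel at 0.10 while still smoothing the noise inside each region.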
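The idea of learning a denoising solution from noisy renders paired with their clean versions, then reusing it on new images, can be illustrated at toy scale. This sketch is heavily simplified and hypothetical. It is not how OptiX works: OptiX uses a deep neural network trained on Iray renders. Here the "model" is a single parameter `a` that blends each pixel toward its neighborhood average. `a` is fitted once by least squares over made-up training pairs and then applied unchanged to a new image, the analogue of already knowing that 5+5=10.

```python
import random


def neighborhood_mean(row, i):
    # Average of the pixel and its immediate neighbors (clamped at the borders).
    window = row[max(0, i - 1): i + 2]
    return sum(window) / len(window)


def train_blend_weight(pairs):
    # Fit one parameter `a` so that x + a * (mean - x) best matches the clean
    # pixel in the least-squares sense. This closed form is the whole "training".
    num = den = 0.0
    for noisy, clean in pairs:
        for i, x in enumerate(noisy):
            d = neighborhood_mean(noisy, i) - x
            num += (clean[i] - x) * d
            den += d * d
    return num / den


def apply_denoiser(row, a):
    # Reuse the learned weight on a new image without re-solving anything.
    return [x + a * (neighborhood_mean(row, i) - x) for i, x in enumerate(row)]


def make_pair(rng, length=32, sigma=0.1):
    # Hypothetical training data: a smooth "clean render" plus Gaussian noise.
    base = rng.uniform(0.2, 0.8)
    clean = [base + 0.005 * i for i in range(length)]
    noisy = [c + rng.gauss(0.0, sigma) for c in clean]
    return noisy, clean


def mse(a, b):
    # Mean squared error between two equally long rows.
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


rng = random.Random(0)
training_pairs = [make_pair(rng) for _ in range(500)]
weight = train_blend_weight(training_pairs)

test_noisy, test_clean = make_pair(rng)
denoised = apply_denoiser(test_noisy, weight)
print(f"learned weight: {weight:.2f}")
print(f"error before: {mse(test_noisy, test_clean):.4f}, after: {mse(denoised, test_clean):.4f}")
```

On this smooth training data the learned weight comes out close to 1, which means full averaging. Training on images with sharp edges would pull it lower, because averaging across an edge increases the error there. A real network learns far richer, content-dependent behavior from its training images.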
